JSWorld Conference 2022 Summary - 1 June 2022 - Part II

JSWorld Conference is the number one JavaScript Conference in the world, and I share a summary of all the talks with you. Part II

Table of contents

- (Recurring) Introduction

- A very important point

- Table of Content

- TL;DR

- The Edge at your Fingertips, literally!

- Building your brand using azure static webapps, one step at a time

- Designing high-performance react applications

- Serverless and deploying to the cloud from vscode

Welcome to the second part of my JSWorld Conference 2022 summary series, in which I share a summary of all the talks with you in four parts.

You can read the first part here, where I summarized the first three talks:

- First Talk: Colin talks about the state of Deno in 2022, compares Deno with Node.js, and gives us a taste of working with Deno.

- Second Talk: Negar talks about how browsers render CSS, and then introduces us to CSS Houdini, which is a set of low-level APIs that exposes parts of the CSS engine.

- Third Talk: Dexter goes over his “perfect” stack, using GraphQL, SvelteKit, Docker, and GitHub.

After this part, you can read the third part here, and the last part here, where I summarized the rest of the talks.

(Recurring) Introduction

After two and a half years, the JSWorld and Vue Amsterdam conferences were back in Theater Amsterdam between 1 and 3 June, and I had the chance to attend for the first time. I learned many things, met many wonderful people, spoke with great developers, and had a great time. JSWorld Conference was held on the first day, and Vue Amsterdam on the second and third days.

The conference was full of information with great speakers, each of whom taught me something valuable. They all wanted to share their knowledge and information with other developers. So I thought it would be great if I could continue to share it and help others use it.

At first, I tried to share a few notes or slides, but I felt it was not good enough, at least not as good as what the speakers shared with me. So I decided to re-watch each talk, dive deeper into it, search, take notes, and combine them with the slides and even the speakers' exact words, so that what I share with you is at least at the same level as what I learned from them.

Since I’m trying to include all the important points, I have to split each day into three, four, or maybe five parts to prevent the article from getting too long.

A very important point

Everything you read in these few articles is the result of the effort and time of the speakers themselves, and I have only tried to learn from them so that I can turn it into these articles. Many of the sentences written in these articles are exactly what they said or what they wrote on their slides. This means that if you learn something new, it is because of their efforts. (So if you see some misinformation blame them, not me, right? xD)

Last but not least, I don’t dig into every technical detail or live coding session in some of the talks. But if you are interested and need more information, let me know and I’ll try to write a more detailed article separately. Also, don’t forget to check out their Twitter/LinkedIn.

Here you can find the program of the conference:

Table of Content

- The Edge at your Fingertips, literally!: Gift Egwuenu — Developer advocate at Cloudflare

- Building your brand using azure static webapps, one step at a time: Stacy Cashmore — Tech Explorer DevOps at Omniplan

- Designing high-performance react applications: Sendil Kumar - Senior software engineer at Uber

- Serverless and deploying to the cloud from vscode: Natalia Venditto - Principal program manager e2e JavaScript at Microsoft

TL;DR

- Fourth Talk: Gift begins with how servers have been managed in the past, continues with how it got better over time with serverless, and ends with what we can expect from Cloudflare Edge services.

- Fifth Talk: Stacy talks about creating a personal brand (blog), using Angular, TypeScript, and static web apps in Azure, and eating the elephant one bite at a time.

- Sixth Talk: Sendil’s talk is about performance and why it matters. He gives some great tips to improve the performance of your app.

- Seventh Talk: Natalia talks about a new provisioning experience in Azure Developer CLI, which goes into public preview at the end of June.

The Edge at your Fingertips, literally!

Gift Egwuenu - Developer advocate at Cloudflare

In the not very distant past, we had to spin up our own server and manage a whole infrastructure just to get our application deployed and available to our users. Today's world is moving from on-premises to cloud deployments, and that has completely changed the picture.

In this talk, she explores the capabilities that the Cloudflare Edge network offers and how you can benefit from it to build and ship faster websites and applications.

What is the Edge?

The edge is a server geographically located close to your users.

Traditional servers

It all started with traditional servers that we maintained ourselves. They were expensive to maintain, hard to scale, and suffered from high latency under heavy traffic.

Serverless functions

Serverless functions are here to solve those problems.

Serverless doesn't mean there are no servers. There's still a server, you just don't manage it. Some of the providers are AWS, Cloudflare, IBM Cloud, Google Cloud, and Azure.

One advantage of serverless functions is that they are hosted by a provider, so there is no need to manage your own servers. They are also cheaper, because you pay only for what you use. On-demand scalability is another advantage.

But there are some limitations too. For example, all functions are stored in a centralized core location (like us-east1), so users far from that location sometimes experience latency and slow page loads.

Another limitation is the cold start: the time it takes for your function to boot up after it has been idle for a while, which can take up to several seconds.

There is also a limit on execution time, which makes it hard to write more complex, long-running functions.

Edge Functions

Edge functions are serverless functions at the edge, and the edge is wherever your users are on Region Earth!

Some of the edge function providers are Cloudflare Workers, AWS Lambda@Edge, Deno Deploy, and Netlify Edge Functions.

With edge functions you get lower latency, zero cold starts, and improved application performance.

On the other hand, only a limited amount of CPU is allocated to your function at the edge, and the moment you exceed it, your function can easily fail.

Another downside is that there is no support for some browser or Node.js-specific features (V8 engine). Most providers use different runtimes: Cloudflare uses the Workers runtime, Deno uses the Deno runtime, and some providers use the Node.js runtime. The problem is that some of these runtimes do not support browser functionality. For example, some packages and libraries are supported by the Cloudflare runtime, but you probably would not be able to use them in a Node.js runtime. So there is no consistency between different providers.

The good news is that Cloudflare, Deno, Node.js, and a couple of other communities have come together to build something called WinterCG (also mentioned in the Deno talk) to solve this problem.

If you are going to use edge functions, you have to be aware of another limitation, which is about managing data. Your function is deployed everywhere, but what about your database? If it still lives in, for example, us-east, somebody loading data on your website will still experience latency because the database is hosted somewhere else.

The Cloudflare Developer Ecosystem

Cloudflare Workers

Cloudflare Workers is a serverless execution environment that allows you to create new applications or augment existing ones without configuring or maintaining infrastructure.

There is no cold start (0ms worldwide), and it helps with cost savings (100,000 requests per day for free).

Cloudflare has its own Workers runtime, built on V8 isolates.

| Virtual Machines | V8 Isolates |
| --- | --- |
| A virtual machine (VM) is a software-based computer that exists within another computer’s operating system. | Isolates are lightweight contexts that group variables with the code allowed to mutate them. |
| Consumes more memory. | Consumes less memory. |
| Start-up time 500 ms to 10 s. | Start-up time under 5 ms. |

A Hello worker example:

export default {

fetch() {

return new Response('Hello Worker!');

},

};

Pages Functions

Pages Functions enable you to build full-stack applications by executing code on the Cloudflare network with help from Cloudflare Workers. Pages itself is a platform that allows you to deploy your site, whether static or Jamstack.

In the past, if you wanted to have a dynamic website, you had to have a Cloudflare Pages application and a separate Workers application. Pages Functions is a solution to that.

Example of a function (Beta)

export async function onRequest(context) {

// Contents of context object

const {

request, // same as existing Worker API

env, // same as existing Worker API

params, // if filename includes [id] or [[path]]

waitUntil, // same as ctx.waitUntil in existing Worker API

next, // used for middleware or to fetch assets

data, // arbitrary space for passing data between middlewares

} = context;

return new Response("Hello, world!");

}

Workers KV

Workers KV is a global, low-latency, key-value data store, which allows you to cache your data on the edge. The downside is that this data is not stateful: KV is eventually consistent, so it is not suited to coordinating state between requests.
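To make the caching idea concrete, here is a minimal sketch of a read-through cache on top of a KV-style store. It assumes only the `get` and `put` methods of a KV binding; the `KVLike` interface, `readThrough`, and `createMemoryKV` are hypothetical names for illustration and are not from the talk.

```typescript
// Minimal shape of the KV methods used here. In a real Worker the binding
// type comes from Cloudflare's Workers types; this interface is an assumption.
interface KVLike {
  get(key: string): Promise<string | null>;
  put(key: string, value: string, options?: { expirationTtl?: number }): Promise<void>;
}

// Read-through cache: return the cached value if present, otherwise load it
// from the origin and store it at the edge for later requests.
async function readThrough(
  kv: KVLike,
  key: string,
  loadFromOrigin: () => Promise<string>,
  ttlSeconds = 60,
): Promise<string> {
  const cached = await kv.get(key);
  if (cached !== null) return cached;

  const fresh = await loadFromOrigin();
  await kv.put(key, fresh, { expirationTtl: ttlSeconds });
  return fresh;
}

// In-memory stand-in for local experiments (hypothetical, ignores the TTL).
function createMemoryKV(): KVLike {
  const store = new Map<string, string>();
  return {
    async get(key) {
      return store.get(key) ?? null;
    },
    async put(key, value) {
      store.set(key, value);
    },
  };
}
```

In a Worker you would pass the KV binding from `env` instead of the in-memory stand-in.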

Durable Objects

Stateful data on the edge.

Durable Objects provide low-latency coordination and consistent storage for the Workers platform through two features:

- global uniqueness!

- transactional storage API.

export class DurableObject {

constructor(state, env) {}

async fetch(request) {

return new Response("Hello World");

}

}
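As a rough illustration of what "stateful data on the edge" means, the sketch below keeps a counter in a Durable Object style storage. It assumes only the `get` and `put` methods of the transactional storage API; `StorageLike`, `Counter`, and `createMemoryStorage` are hypothetical names, and in a real Durable Object the `fetch` handler would call `increment()`.

```typescript
// Minimal shape of the storage methods used here (an assumption for this sketch).
interface StorageLike {
  get<T>(key: string): Promise<T | undefined>;
  put<T>(key: string, value: T): Promise<void>;
}

// A counter whose value survives between requests because it lives in storage.
class Counter {
  private readonly storage: StorageLike;

  constructor(state: { storage: StorageLike }) {
    this.storage = state.storage;
  }

  async increment(): Promise<number> {
    const current = (await this.storage.get<number>("count")) ?? 0;
    const next = current + 1;
    await this.storage.put("count", next);
    return next;
  }
}

// In-memory stand-in so the sketch can run outside the Workers platform.
function createMemoryStorage(): StorageLike {
  const data = new Map<string, unknown>();
  return {
    async get<T>(key: string) {
      return data.get(key) as T | undefined;
    },
    async put<T>(key: string, value: T) {
      data.set(key, value);
    },
  };
}
```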

R2

R2 storage allows developers to store large amounts of unstructured data without the costly egress bandwidth fees associated with typical cloud storage services.

R2 BINDINGS:

# wrangler.toml

[[r2_buckets]]

binding = 'MY_BUCKET' # <~ valid JavaScript variable name

bucket_name = '<YOUR_BUCKET_NAME>'

D1

Cloudflare’s first SQL Database.

Common use cases

- Geolocation Based Redirects

- A/B Testing

- Custom HTTP Headers & Cookie Management

- User Authentication and Authorization

- Localization and Personalization

When not to use Cloudflare workers

- Data Latency — The database is not hosted on the Edge!

- Heavy computation — The function requires a large amount of computing resources to run.

Workers Examples

- Geolocation based redirects

export default {

async fetch(request: Request): Promise<Response> {

const { cf } = request

if ( cf?.country === "NL") {

return Response.redirect("https://asos.com/nl")

}

return fetch(request) // all other visitors continue to the origin unchanged

},

};

- Retry/Log failed requests

export default {

async fetch(request: Request): Promise<Response> {

const res = await fetch("https://httpstat.us/503?sleep=2000")

if (!res.ok) {

return await fetch("https://httpstat.us/200")

} else {

return res

}

}

}

- A/B Testing remote origins

export default {

async fetch(request: Request): Promise<Response> {

const origin1 = 'https://cloudflare.com';

const origin2 = 'https://jsworldconference.com';

const randomNumber = Math.floor(Math.random() * 100) + 1;

if (randomNumber > 50) {

return fetch(origin1);

} else {

return fetch(origin2);

}

},

};
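The example above picks an origin at random on every request, so the same visitor can bounce between variants. One possible refinement, sketched here as an assumption rather than something shown in the talk, is to derive the variant from a stable identifier. `fnv1a` and `pickVariant` are hypothetical helper names; the hash is FNV-1a applied to UTF-16 code units.

```typescript
type Variant = "A" | "B";

// 32-bit FNV-1a hash over the string's UTF-16 code units.
function fnv1a(input: string): number {
  let hash = 0x811c9dc5; // FNV offset basis
  for (let i = 0; i < input.length; i++) {
    hash ^= input.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193); // FNV prime, 32-bit multiply
  }
  return hash >>> 0; // convert to an unsigned 32-bit integer
}

// Maps a stable id (for example a cookie value) to a variant.
// percentA is the share of ids, from 0 to 100, that should see variant A.
function pickVariant(userId: string, percentA = 50): Variant {
  return fnv1a(userId) % 100 < percentA ? "A" : "B";
}
```

Inside the Worker, the result of `pickVariant` would decide whether to fetch `origin1` or `origin2`.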

Building applications on the Edge helps bring us close to our end-users, improving their experience and making the web more accessible to everyone.

Building your brand using azure static webapps, one step at a time

Stacy Cashmore - Tech Explorer DevOps at Omniplan

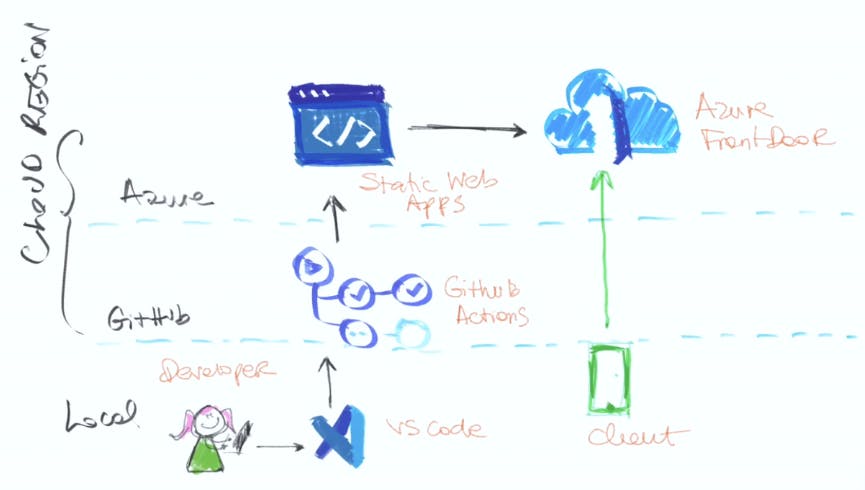

We're told that by using services such as Medium, dev.to, etc., we are diluting our personal brand, and that we should be posting to our own site and building ourselves up. But making that move can seem huge! Rather than eating the elephant, which can seem impossible, how about slowly moving to your own space? Build your brand whilst still using those great features that attracted you to your chosen platform in the first place. With Angular, TypeScript, and Static Web Apps in Azure, you no longer need to run web servers or App Services in order to host your site online. You can get set up in minutes! In this session she talked about how to get started: creating a Static Web App, deploying our code, bringing in posts from dev.to to our own site, and eating the elephant one bite at a time to create your personal brand!

In her presentation she built a simple app using Angular and Azure Static Web Apps that reads her blog posts from dev.to and displays them.

That was a great Talk and got me thinking about building such a blog for myself, but not with the same tech stack. There is nothing wrong with her choice at all, it was a nice and easy-to-use stack, but I have some other ideas for my blog.

You can learn more about Azure Static Web Apps here:

What is Azure Static Web Apps?

But let me know if you need me to cover this talk on a deeper technical level.

Designing high-performance react applications

Sendil Kumar - Senior software engineer at Uber

Why does performance matter?

- 4.42% of users drop for every additional second of load time.

- Every 1s improvement increases CR (Conversion rate) by 2%. That means 100M = 200K ⏫

- Faster sites ⇒ Happier users ⇒ Higher CR

The ideal load time is around 3–4 s (source) but the average mobile site load time is around 15s (source)

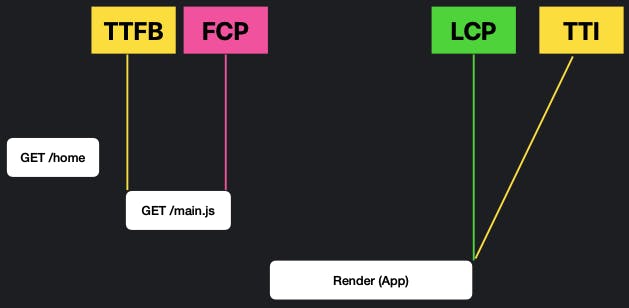

Before we jump into performance, it’s very important to understand the Web Vitals. When the user wants to load your index.html, the browser makes a GET request:

- TTFB: Time To First Byte — The very first Byte that reaches from your server to the user, and that's the entry point of your application.

- FCP: First Contentful Paint

- LCP: Largest Contentful Paint

- TTI: Time To Interactive (at this point the app is usable for users).

The time difference between FCP and LCP defines the performance of your app.

When you’re thinking about the performance and loading of your app, you have to think about Layout Shifts as well, because your app might change once it is loaded and might look different with every state change.

So a faster Largest Contentful Paint, a sooner Time To Interactive, and fewer layout shifts are what you should target if you want to build a high-performance application.

Agenda

- Obvious: Things that you know already!

- Rendering: Render based on your needs

- It depends: It depends on your use case

- Common: Use it everywhere

Obvious

Bundling & Splitting forms the basis of everything that we are doing with the help of bundlers like Webpack, Parcel, etc.

The main javascript file can contain the whole application or just a part of your application and you can determine that using bundlers.

The browser has to wait until the main.js is completely downloaded because there is no streaming compilation for JavaScript in the browser. So the smaller the size of this file, the better the performance.

Another way is to split the bundle by route (route-based splitting) or by component (component-based splitting).
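The core idea behind both kinds of splitting is that a chunk is only requested the first time it is needed. The sketch below illustrates that with a small memoizing wrapper around a loader function. `createLazy` is a hypothetical helper, not React's `lazy`, and the module path in the comment is an assumption.

```typescript
// Wraps a loader so the underlying chunk is requested at most once,
// and only when something first asks for it.
function createLazy<T>(loader: () => Promise<T>): () => Promise<T> {
  let pending: Promise<T> | undefined;
  return () => {
    if (pending === undefined) {
      pending = loader(); // first use triggers the download
    }
    return pending; // later uses share the same promise
  };
}

// With a bundler, the loader would typically be a dynamic import, e.g.
//   const loadSettings = createLazy(() => import("./pages/Settings"));
// so the Settings code ends up in its own chunk (hypothetical path).
```

Bundlers such as Webpack treat a dynamic `import()` as a split point, which is what makes the route or component land in a separate file.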

You also have to budget the JavaScript that you are sending: 100-170 KB is the ideal size.

Take some time to analyze the JavaScript bundle size, try to optimise it, and make sure that what you are sending to the client is what the client needs.

Once the app is loaded in the browser, how can you make it faster, or at least make it feel faster?

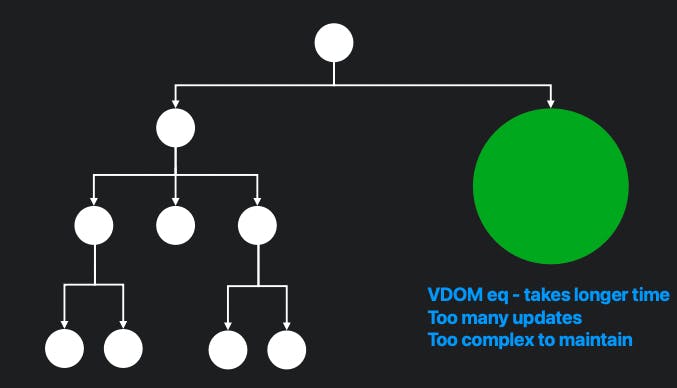

Try to avoid data mutation most of the time, because every mutation causes re-rendering, and every re-render has a performance cost.

Also, immutability is your friend. It's essential to keep components, props, and state immutable, because that makes it easier to reduce the number of nodes that get re-rendered.

And think about this: architect your application so that a re-render affects fewer components in the tree.
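A small sketch of what an immutable update looks like in practice: only the changed item gets a new object, and everything else keeps its identity, so a reference comparison can cheaply tell which parts of the tree need attention. The `Todo` type and `toggleTodo` function are hypothetical examples.

```typescript
interface Todo {
  readonly id: number;
  readonly title: string;
  readonly done: boolean;
}

// Returns a new array; only the matching item is replaced by a new object.
function toggleTodo(todos: readonly Todo[], id: number): readonly Todo[] {
  return todos.map((todo) =>
    todo.id === id ? { ...todo, done: !todo.done } : todo,
  );
}

const todos: readonly Todo[] = [
  { id: 1, title: "Write summary", done: false },
  { id: 2, title: "Publish part II", done: false },
];
const next = toggleTodo(todos, 2);

console.log(next === todos);       // false: the list itself changed
console.log(next[0] === todos[0]); // true: the untouched item kept its reference
```

Because untouched items keep the same reference, memoized components that receive them as props can skip re-rendering.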

We can also make use of virtualization. Always worry about the things that you are going to show in the viewport, not about the things that you are not showing yet or will only show later.
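The heart of list virtualization is a small calculation: given the scroll position, work out which rows intersect the viewport and render only those. The sketch below assumes fixed-height rows; `visibleRange` and the `overscan` parameter are hypothetical names for illustration.

```typescript
interface VisibleRange {
  start: number; // index of the first row to render
  end: number;   // index after the last row to render (exclusive)
}

// Computes which rows of a fixed-height list need to be rendered.
// overscan adds a few extra rows above and below to avoid flicker while scrolling.
function visibleRange(
  scrollTop: number,
  viewportHeight: number,
  itemHeight: number,
  itemCount: number,
  overscan = 2,
): VisibleRange {
  const first = Math.floor(scrollTop / itemHeight);
  const last = Math.ceil((scrollTop + viewportHeight) / itemHeight);
  return {
    start: Math.max(0, first - overscan),
    end: Math.min(itemCount, last + overscan),
  };
}

// A 10,000-row list in a 500px viewport with 50px rows renders 12 rows at the top.
console.log(visibleRange(0, 500, 50, 10000)); // { start: 0, end: 12 }
```

Libraries that implement virtualization build on the same idea and additionally handle variable row heights and scroll events.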

And finally, React 18 comes with Automatic batching. Batching is when React groups multiple state updates into a single re-render for better performance. Here are the advantages:

- Better performance

- No unnecessary re-renders

- Consistent User Experience

- No weird intermediate state
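To show the effect of batching in isolation, here is a tiny store that renders once per update unless the updates are wrapped in `batch`. This is a conceptual sketch only, not how React implements automatic batching; `createStore` and its methods are hypothetical names.

```typescript
// A minimal store: render is called after each update, or once per batch.
function createStore<S>(initial: S, render: (state: S) => void) {
  let state = initial;
  let depth = 0;     // how many batch() calls are currently open
  let dirty = false; // whether state changed inside the current batch

  function setState(update: (prev: S) => S): void {
    state = update(state);
    if (depth > 0) {
      dirty = true; // postpone rendering until the batch ends
    } else {
      render(state);
    }
  }

  function batch(work: () => void): void {
    depth++;
    try {
      work();
    } finally {
      depth--;
      if (depth === 0 && dirty) {
        dirty = false;
        render(state); // one render for all grouped updates
      }
    }
  }

  return { setState, batch, getState: () => state };
}
```

Two updates outside `batch` trigger two renders, while the same two updates inside `batch` trigger one, and the render never sees the intermediate state.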

Rendering

Rendering helps you achieve good performance. There are two types of rendering.

Client-Side Rendering (CSR)

It’s nothing but static rendering. In this model, the server sends the HTML, CSS, and JS files, and the browser renders everything together.

It's very good for static components like header or footer or sidebar or anything you have that is much more static which you just load once and send to the users.

Pros and Cons:

- 👍 Fast Time To First Byte

- 👍 Good for static content

- 👎 Performance depends on the client. So if you are sending a lot of JavaScript, the browser on which the users work matters.

- 👎 TTI is high

- 👎 A large bundle size hurts your site's performance

Server-Side Rendering (SSR)

In SSR, the server generates HTML from components and sends it to the user.

- The first state is the loading state. It starts to load the app, then the server fetches the data that is required for the component to show, renders the HTML, and then sends it to the browser.

- The client receives HTML and starts to load it. But in this state, it’s only HTML and there are no interactions — Only HTML kind of interactions like links are allowed.

- The last state is Hydrate, and when it happens, the app becomes more and more interactive.

It’s essential to understand that it has to finish a state before it starts the next state. That means: Finish Fetching → Finish loading → Finish Hydrate → Interact

Pros and Cons

- 👍 Fast TTI

- 👍 Good for dynamic content

- 👍 Performance doesn’t depend on the client

- 👎 TTFB is high

- 👎 High server cost

You need to balance between SSR and CSR; neither of them is always better.

React 18 - Suspense in SSR

<Suspense /> lets you wait for code to load.

Consider this is the structure of the components:

<Layout>

<Header />

<Sidebar />

<Main>

{/* Post and Comments components are server-side rendered */}

<Post />

<Comments />

</Main>

<Footer />

</Layout>

For that to work, in the server, we have to fetch the post, and then for every post, we have to fetch the comments of that post and that takes a longer time.

fetchPosts().then(post => {

fetchCommentForPost(...)

});

But what we can do is wrap the Comments component inside a Suspense component. Now we can fetch the post, send that data to the client right away, and then fetch the comments for the post and let them load later:

<Layout>

<Header />

<Sidebar />

<Main>

<Post /> {/* fetchPost(); */}

<Suspense>

<Comments /> {/* fetchCommentsForPost(); */}

</Suspense>

</Main>

<Footer />

</Layout>

This eliminates the need to finish one stage for the whole app before starting the next one (Finish Fetching → Finish loading → Finish Hydrate → Interact).

And this is what it looks like on the frontend:

Here is a great resource for islands architecture that helps in this context:

It depends

React 18 - Server Components

Server Components are React components that run on the server, but they are not production-ready yet.

You can define two kinds of components. *.server.js which runs on the server and *.client.js which runs on the client, and you can also have the normal *.js components.

// blog.js

import marked from 'marked'; // 229 kb

import { date } from 'costly-date-lib'; // 954 kb

import { db } from './db'; // 32 kb

function Blog() {

return db.posts.getAll().map(post =>

<section key={ post.id }>

<h1> { post.title } </h1>

<span> { date(post.date) } </span>

<article>

{ marked(post.html) }

</article>

</section>

);

}

// 1215 kb

// Calling DB from the client

// Calculates them on the client

// blog.server.js

import marked from 'marked'; //

import { date } from 'costly-date-lib'; // Handled in server

import { db } from './db'; //

function Blog() {

return db.posts.getAll().map(post =>

<section key={ post.id }>

<h1> { post.title } </h1>

<span> { date(post.date) } </span>

<article>

{ marked(post.html) }

</article>

</section>

);

}

//SSR HTML

Pros and Cons:

- 👍 No huge dependencies

- 👍 Props are allowed

- 👍 Can import *.client.js

- 👎 No interactivity

- 👎 No State/user events

Use Technologies as needed

Try not to overcomplicate things. Don’t use Redux, Lodash, CSS-in-JS, etc. just because of the fancy name; think about whether you really need them.

Limit component size

Common

Cache is your friend

You have to use it heavily if you are using SSR. In the case of CSR, try to cache most of the static components that you have.

Try to put your caches across the globe using the edge. The server generates the HTML and sends it to the edge, where it is cached and then sent to the browser. The next time, you don’t need to generate that HTML again, and the cached file is close to your user, so latency is low.
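The generate-once, serve-many flow described above can be sketched as a small cache with a time-to-live. This is a simplified in-memory illustration, not an edge platform API; `TtlCache` and `getOrCreate` are hypothetical names, and the injectable clock exists only to make the behavior easy to check.

```typescript
// Caches generated values (for example rendered HTML) for a limited time.
class TtlCache<V> {
  private readonly entries = new Map<string, { value: V; expiresAt: number }>();
  private readonly ttlMs: number;
  private readonly now: () => number;

  constructor(ttlMs: number, now: () => number = () => Date.now()) {
    this.ttlMs = ttlMs;
    this.now = now;
  }

  // Returns the cached value while it is fresh; otherwise regenerates and stores it.
  getOrCreate(key: string, create: () => V): V {
    const currentTime = this.now();
    const hit = this.entries.get(key);
    if (hit !== undefined && hit.expiresAt > currentTime) {
      return hit.value;
    }
    const value = create();
    this.entries.set(key, { value, expiresAt: currentTime + this.ttlMs });
    return value;
  }
}
```

Keyed by URL, the `create` callback would be the expensive server render, which then runs once per TTL window instead of once per request.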

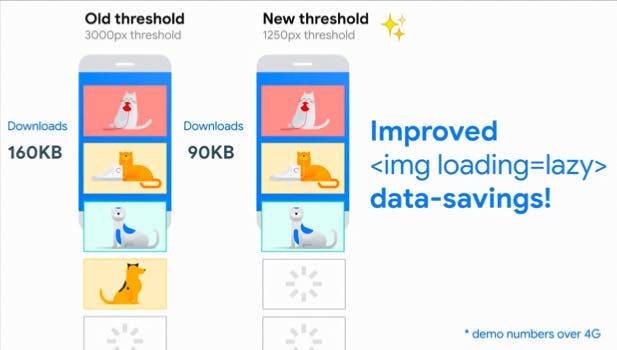

Image lazy loading

<img src="image.png" loading="lazy" alt="..." />

Inline critical; defer non-critical

Inline all the things that are essential for your application to run, and defer the things that are not necessary for the users.

Remove unused resources

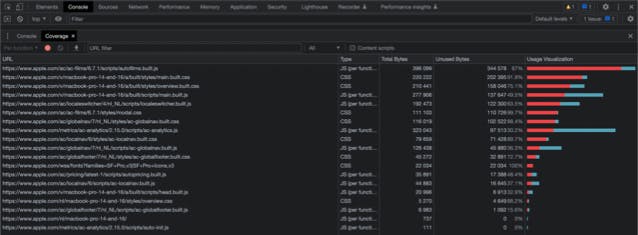

Even if you get most things about your application right, go ahead and open the Coverage tab in Chrome DevTools, and you'll see that around 1 MB to 3 MB of data is unused.

Also useful

- Preload

- Lazy load

- Minimize Roundtrips

Performance Optimisation is an ongoing process, so Measure! → Optimize!! → Measure!!!

Serverless and deploying to the cloud from vscode

Natalia Venditto - Principal program manager e2e JavaScript at Microsoft

Natalia leads the e2e developer experience for all JavaScript developers that deploy, build, and play with Azure.

What I do is I build stuff all day long on Azure. Across many products, I try to integrate, break it, and stress it, and make sure that when a JavaScript developer goes to Azure they have what they need.

In her talk, she covers a new provisioning experience in Azure Developer CLI, which goes into public preview at the end of June.

She decided to do a live demo, but unfortunately the internet connection didn’t keep up and basically ruined her talk. But I’m amazed by how she managed the stage and tried to give us something. She tweeted later:

The key is, you are the speaker, you know your trade, you know your sh*t. Slides and demos are only supporting material, should not be central to all.

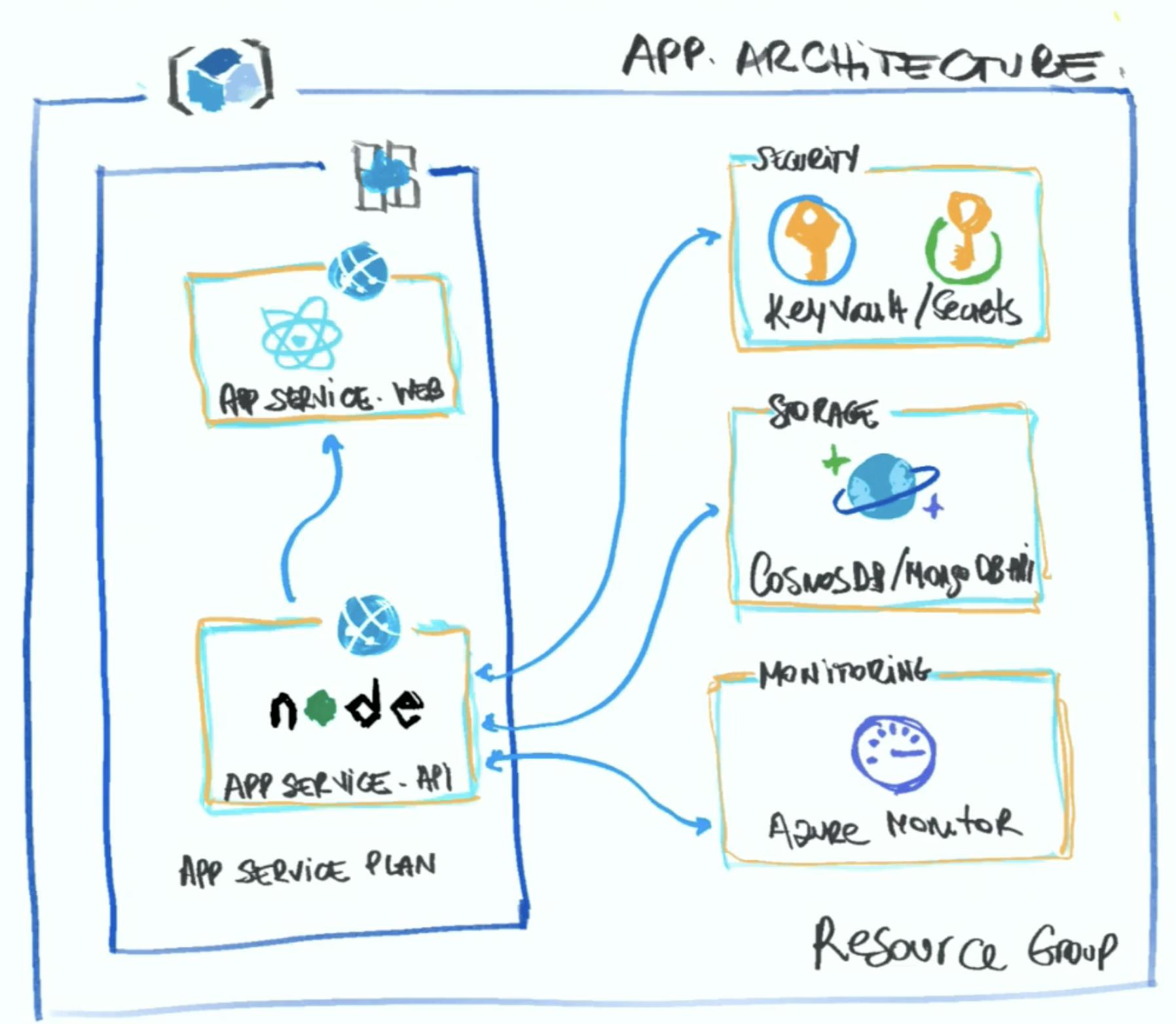

This is the architecture of an app she was building recently:

But when it’s time to deploy all those things into the cloud, and when we start having more complex applications, there are more integrations, or we get the requirement that it has to be super performant and accessible from all over the world with low latency, etc., you suddenly see things go wrong.

Now we have to do Provisioning which probably is not our favorite thing to do!

She showcased how easy it is to start provisioning all the resources with the azd up command, which starts creating the Azure resources and deploying them using a Bicep file.

Bicep is a domain-specific language (DSL) that uses a declarative syntax to deploy Azure resources. It is type-safe, provides IntelliSense, and supports all the types of resources and APIs that exist in Azure.

Nowadays if you try to integrate two products or two APIs or services, it sometimes feels like you are going down a road and trying to find a ramp and you never find it or when you find it you already passed it! If just a local setup is so complicated, when you have to deploy it to the cloud it becomes more complicated. And not all of us have the luxury of choosing the provider we want; sometimes it's a decision coming from above. We want to make sure that we have the same amazing experience all across the industry, that's why we have standards.

With this tool, we specify everything we need in that Bicep file, and running the azd up command performs all the necessary steps in the right order: it builds, deploys, and publishes both the API and the web app, and you don’t have to do anything else.

End of Part II

I hope you enjoyed this part and it can be as valuable to you as it was to me.

You can continue with the third part and then the last part, where I summarize the rest of the talks, which are about:

- Eighth Talk: Max shares lessons he learned after dealing for 4 years with Micro-Frontend at DAZN.

- Ninth Talk: Eleftheria talks about UX and UI from the perspective of both users and developers and why you should care as a developer.

- Tenth Talk: Jemima takes a look at the humble beginnings of JS and how it exploded into the chimaera of frameworks and libraries that we have today.

- Eleventh Talk: Gert talks about upcoming Storybook 6.4, 6.5, and 7.0.

- Twelfth Talk: Wim’s talk is about the problems or difficulties that must be overcome to allow our app to move freely to the phones of our end users and give ourselves the necessities to keep this app running.

- Last Talk: Samuel’s talk is mostly about experience. What is the best developer experience? Are the answers in SDKs, Relations, Love, or Thunder?